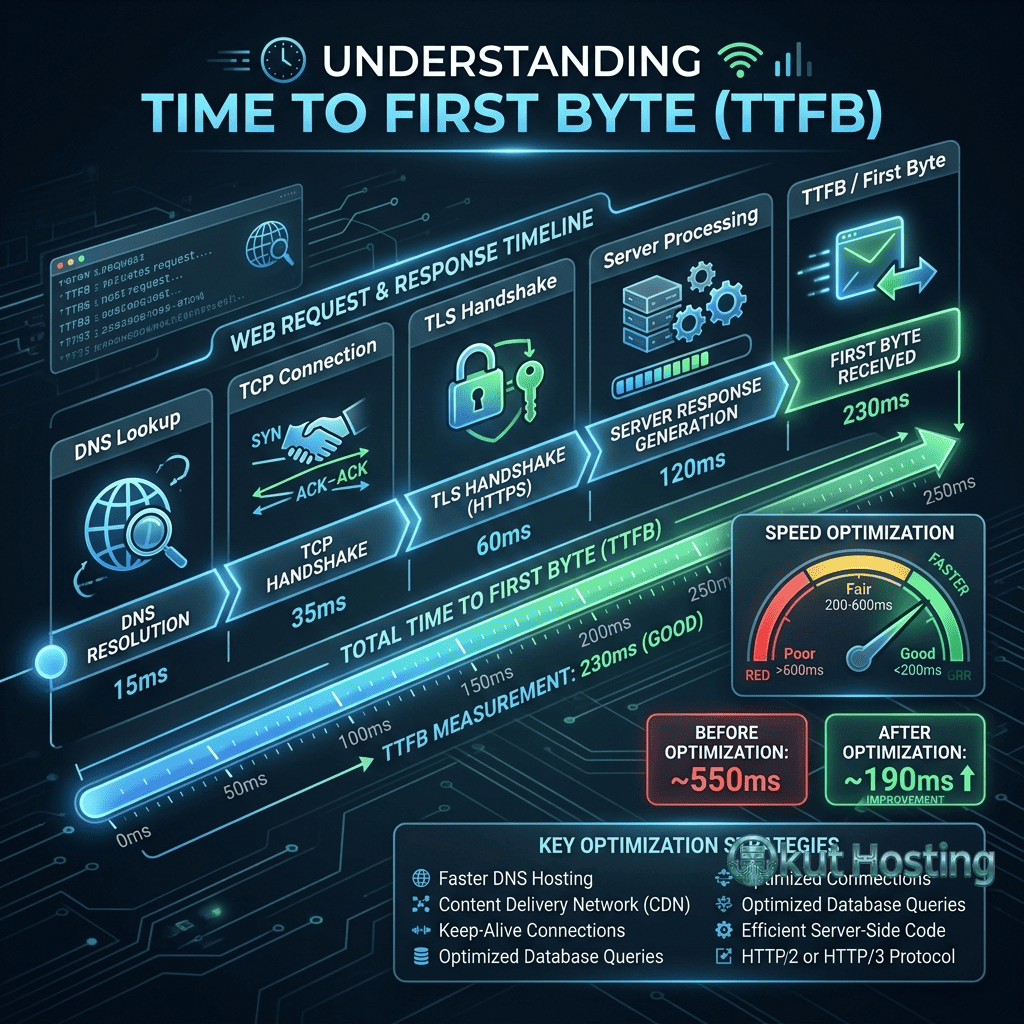

Time to First Byte (TTFB) measures the time from when a client sends an HTTP request to when it receives the first byte of the response from the server. TTFB spans several phases: DNS resolution, TCP connection establishment, the TLS handshake (for HTTPS), and server processing. Because every other loading milestone waits on it, TTFB directly influences Largest Contentful Paint (LCP) and the overall page load experience. TTFB is not itself a Core Web Vital; Google treats it as a diagnostic metric and recommends 800 milliseconds or less as the threshold for good performance. Optimizing TTFB requires addressing both server-side processing and network delivery.

This guide covers TTFB optimization comprehensively, examining the components that contribute to server response time, server-side optimization techniques, database performance, caching strategies, CDN implementation, WordPress-specific optimization, hosting infrastructure selection, and monitoring approaches. The information provides actionable guidance for reducing TTFB across all web platforms.

TTFB Components

TTFB includes several sequential phases that contribute to total response time. DNS lookup resolves the domain name to an IP address (typically 20-120ms, reduced through DNS caching and fast DNS providers). The TCP handshake establishes the transport connection between client and server (one round trip, approximately equal to network latency). The TLS handshake negotiates encryption for HTTPS connections (two round trips for a full TLS 1.2 handshake, one round trip for TLS 1.3). Server processing time covers all server-side work, including application logic, database queries, template rendering, and response generation. Each component offers optimization opportunities, though server processing time typically offers the largest improvement potential for dynamic websites.
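The way these phases add up can be sketched with simple arithmetic. The snippet below is a rough model, not a measurement tool: the function and field names are hypothetical, and it assumes one extra round trip for the request and first response byte, which the breakdown above implies but does not list separately.

```python
from dataclasses import dataclass

# Round trips for a full handshake; None means plain HTTP (no TLS).
TLS_ROUND_TRIPS = {None: 0, "1.2": 2, "1.3": 1}


@dataclass
class TtfbEstimate:
    dns_ms: float
    tcp_ms: float
    tls_ms: float
    request_ms: float
    server_ms: float

    @property
    def total_ms(self) -> float:
        # The phases run one after another, so they simply add up.
        return (self.dns_ms + self.tcp_ms + self.tls_ms
                + self.request_ms + self.server_ms)


def estimate_ttfb(rtt_ms, dns_ms, server_ms, tls_version="1.3"):
    """Estimate TTFB on a cold connection from its sequential phases."""
    return TtfbEstimate(
        dns_ms=dns_ms,
        tcp_ms=rtt_ms,                                 # TCP handshake: one round trip
        tls_ms=TLS_ROUND_TRIPS[tls_version] * rtt_ms,  # TLS handshake
        request_ms=rtt_ms,                             # request out, first byte back
        server_ms=server_ms,                           # application processing
    )


estimate = estimate_ttfb(rtt_ms=50, dns_ms=30, server_ms=200, tls_version="1.3")
print(estimate.total_ms)
```

With these illustrative inputs, server processing accounts for more than half of the total, which is why it is usually the first place to look on dynamic sites.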

Server Processing Optimization

Application Code Efficiency

Server-side application code directly impacts processing time. Optimization approaches include: reducing unnecessary computation in request handling paths; eliminating redundant database queries through query optimization and batching; implementing efficient algorithms for data processing; caching computed results that don’t change between requests; profiling application code to identify performance bottlenecks; and reducing external API calls that add latency to page generation. Application profiling tools (New Relic, Datadog APM, Xdebug for PHP) identify specific functions and queries that consume the most processing time.

PHP and Server-Side Language Optimization

For PHP-based websites (WordPress, Laravel, Drupal), PHP version and configuration significantly impact TTFB. PHP 8.x is measurably faster than PHP 7.x, mainly through core engine optimizations; its JIT compiler is effectively disabled by default and mostly benefits CPU-bound code rather than typical request-driven web applications. OPcache (bytecode caching) eliminates PHP file parsing and compilation on every request, which substantially reduces processing time. PHP-FPM (FastCGI Process Manager) configuration, including worker counts, process management mode, and memory limits, determines how many concurrent requests the server can handle. Keeping PHP updated to the latest stable version provides cumulative performance improvements from each release’s optimizations.

Database Optimization

Database queries are frequently the single largest contributor to server processing time for dynamic websites. Database optimization strategies include: adding indexes to frequently queried columns; optimizing slow queries identified through slow query logs; reducing the number of queries per page request through query batching and JOIN operations; caching query results in Redis or Memcached (the built-in MySQL query cache was removed in MySQL 8.0 and should not be relied on); regularly maintaining database tables (optimizing, repairing fragmented tables); and removing unnecessary data (transients, revisions, spam comments in WordPress). Database performance monitoring identifies the specific queries that contribute most to TTFB.
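The effect of adding an index can be checked by asking the database for its query plan before and after. The sketch below uses SQLite from the Python standard library purely as a stand-in for a production database; the table, column, and index names are hypothetical.

```python
import sqlite3


def query_plan(conn, sql, params=()):
    """Return the human-readable detail of EXPLAIN QUERY PLAN as one string."""
    rows = conn.execute("EXPLAIN QUERY PLAN " + sql, params).fetchall()
    # The detail text is the last column of each plan row.
    return " | ".join(row[-1] for row in rows)


conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE posts (id INTEGER PRIMARY KEY, author_id INTEGER, title TEXT)"
)
conn.executemany(
    "INSERT INTO posts (author_id, title) VALUES (?, ?)",
    [(i % 100, f"Post {i}") for i in range(10_000)],
)

sql = "SELECT title FROM posts WHERE author_id = ?"

# Without an index, the filter requires a full table scan.
plan_before = query_plan(conn, sql, (42,))

# With an index on the filtered column, the engine can seek directly.
conn.execute("CREATE INDEX idx_posts_author ON posts (author_id)")
plan_after = query_plan(conn, sql, (42,))

print(plan_before)
print(plan_after)
```

MySQL and PostgreSQL expose the same idea through their own EXPLAIN statements, though the output format differs.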

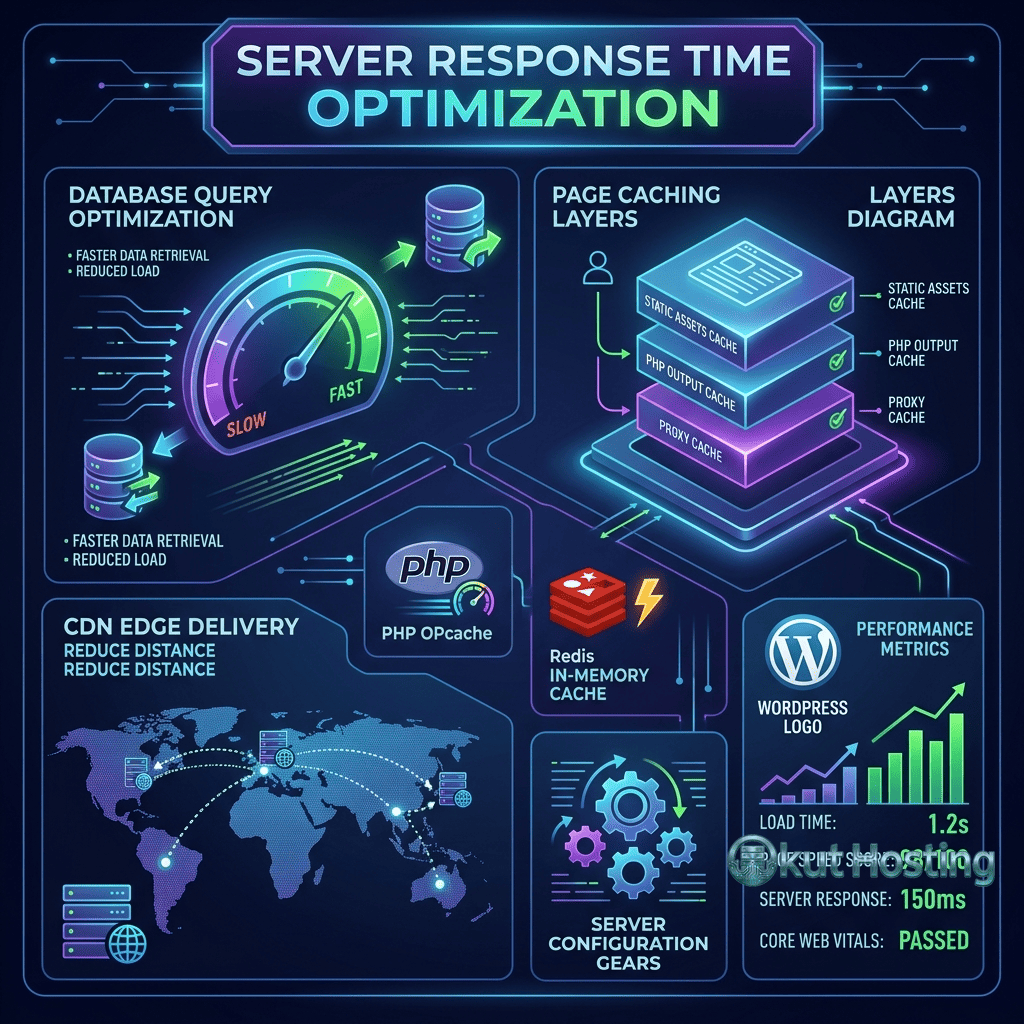

Page Caching

Page caching stores fully rendered HTML pages and serves them directly on subsequent requests without executing application code or database queries. For cached pages, this typically cuts server processing time from hundreds of milliseconds to a few milliseconds, leaving network latency as the main remaining component of TTFB. Page caching implementations include: server-level caching (Varnish, Nginx FastCGI cache, LiteSpeed Cache); application-level caching (WordPress caching plugins, framework cache middleware); and CDN-level caching (caching full HTML pages at CDN edge locations). Combining multiple caching layers provides the lowest TTFB by serving cached content from the closest available cache.
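The core mechanism can be sketched in a few lines. This is a minimal in-process illustration, assuming a time-based expiry; the class and method names are hypothetical, and real page caches (Varnish, Nginx, caching plugins) add cache keys, purging rules, and bypass logic for logged-in users.

```python
import time


class PageCache:
    """Stores rendered HTML per URL and reuses it until it expires."""

    def __init__(self, ttl_seconds, clock=time.monotonic):
        self.ttl = ttl_seconds
        self.clock = clock  # injectable so expiry can be tested without sleeping
        self._store = {}    # url -> (expires_at, html)

    def get_or_render(self, url, render):
        now = self.clock()
        entry = self._store.get(url)
        if entry is not None and entry[0] > now:
            return entry[1]  # cache hit: no application code runs
        html = render(url)   # cache miss: run the expensive render once
        self._store[url] = (now + self.ttl, html)
        return html

    def invalidate(self, url):
        """Drop a cached page, for example after its content is edited."""
        self._store.pop(url, None)
```

A request handler would call `cache.get_or_render(url, render_page)`, so the slow render path only runs on a miss or after expiry.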

Object Caching

Object caching stores individual database query results or computed values in memory (Redis, Memcached), reducing database load for frequently accessed data. Unlike page caching which stores complete HTML output, object caching stores individual data objects that multiple pages may use. Object caching is particularly effective for: dynamic pages that cannot be fully page-cached (logged-in users, personalized content); frequently accessed database queries (navigation menus, sidebar widgets, configuration settings); and computed values that are expensive to generate but change infrequently.
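The difference from page caching is easiest to see when several pages share one cached object. The sketch below uses a plain dictionary as a stand-in for Redis or Memcached; the class, the `nav_menu` key, and the helper functions are hypothetical.

```python
class ObjectCache:
    """In-memory stand-in for an external object cache (cache-aside pattern)."""

    def __init__(self):
        self._data = {}
        self.hits = 0
        self.misses = 0

    def get(self, key, loader):
        if key in self._data:
            self.hits += 1
            return self._data[key]
        self.misses += 1
        value = loader()  # e.g. the database query behind a navigation menu
        self._data[key] = value
        return value

    def delete(self, key):
        """Invalidate an object after the underlying data changes."""
        self._data.pop(key, None)


def render_page(title, cache, load_menu):
    # The page itself is built per request, but the menu comes from the cache.
    menu = cache.get("nav_menu", load_menu)
    links = "".join(f"<a>{item}</a>" for item in menu)
    return f"<nav>{links}</nav><h1>{title}</h1>"
```

Every page that calls `render_page` reuses the same cached menu, so the menu query runs once rather than once per request, even when the pages themselves cannot be page-cached.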

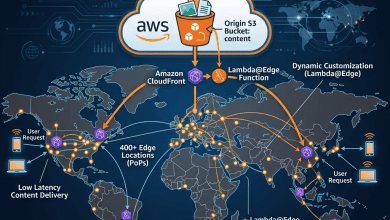

CDN and Edge Caching

CDN implementation reduces TTFB by serving cached content from edge servers geographically closer to visitors. For static assets (images, CSS, JavaScript), CDN caching eliminates the distance-related latency of serving from the origin server. For HTML pages, CDN edge caching (available through Cloudflare, CloudFront, and other CDN providers) serves cached page content from edge locations, providing the lowest possible TTFB for cacheable pages. The CDN’s proximity to visitors reduces the network component of TTFB, while origin caching reduces the processing component.

WordPress TTFB Optimization

WordPress sites commonly experience elevated TTFB due to plugin overhead, database query volume, and dynamic page generation. WordPress-specific TTFB optimization includes: enabling page caching through plugins (WP Super Cache, W3 Total Cache, WP Rocket); implementing object caching with Redis or Memcached; reducing active plugin count (each plugin adds processing overhead); using a lightweight, performance-optimized theme; enabling PHP OPcache for bytecode caching; upgrading to PHP 8.x for its engine performance improvements; optimizing the WordPress database (removing transients, revisions, spam); and implementing CDN delivery for static assets and potentially full-page caching.

Hosting Infrastructure Impact

The hosting infrastructure sets the baseline TTFB a site can achieve. Shared hosting typically produces the highest TTFB because CPU, memory, and I/O are shared with other sites. VPS hosting provides dedicated resources with more consistent TTFB. Managed WordPress hosting (Kinsta, Cloudways, WP Engine) provides server configurations tuned specifically for WordPress. Cloud hosting (AWS, Google Cloud, Azure) provides scalable resources that maintain TTFB under varying load. The geographic location of the hosting server relative to the target audience also affects TTFB: hosting in a data center close to the majority of visitors minimizes network latency.

DNS Optimization

DNS resolution contributes to TTFB for the initial connection to a domain. DNS optimization approaches include: using a fast authoritative DNS provider (Cloudflare DNS, AWS Route 53, Google Cloud DNS); enabling DNS prefetching for third-party domains through <link rel="dns-prefetch"> hints; minimizing the number of unique domains on the page to reduce DNS lookups; and setting TTL values long enough for resolvers to cache records effectively. While DNS resolution time is typically a small component of total TTFB, it contributes to every initial page load and can add 50-200ms on slow DNS providers.

TLS Optimization

The TLS handshake contributes to TTFB for HTTPS connections. TLS optimization approaches include: upgrading to TLS 1.3, which requires one round trip instead of the two needed for a full TLS 1.2 handshake; enabling OCSP stapling to avoid separate certificate validation requests; using ECDSA certificates, which are smaller than RSA certificates and cheaper for the server to sign with during the handshake; enabling HTTP/2 or HTTP/3 for connection multiplexing; and enabling session resumption (TLS session tickets, session IDs) for faster reconnections. Modern server configurations with TLS 1.3 minimize the TLS contribution to TTFB.

TTFB Measurement Tools

Tools for measuring and monitoring TTFB include: the browser DevTools Network panel (showing the server wait for each request as “Waiting for server response”, which excludes DNS and connection setup); WebPageTest (providing a detailed TTFB breakdown by component); PageSpeed Insights (reporting field TTFB data alongside its Core Web Vitals assessment); the curl command-line tool (providing TTFB measurement through its timing output); and synthetic monitoring services (Pingdom, GTmetrix, Uptrends) for continuous TTFB monitoring from multiple locations. Testing TTFB from multiple geographic locations identifies whether TTFB issues are server-side (consistent across locations) or network-related (varying by location).
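For scripted checks, a similar measurement can be approximated with the Python standard library. The function below is a rough sketch with a hypothetical name: it stops the clock once the status line and headers have been read, which is slightly later than the literal first byte, and it does not separate DNS from TCP and TLS setup.

```python
import http.client
import time


def measure_ttfb(host, port=80, path="/", use_tls=False, timeout=10.0):
    """Approximate TTFB for one GET request on a fresh connection."""
    conn_cls = http.client.HTTPSConnection if use_tls else http.client.HTTPConnection
    conn = conn_cls(host, port, timeout=timeout)
    try:
        start = time.perf_counter()
        conn.connect()                 # DNS lookup + TCP handshake (+ TLS handshake)
        connected = time.perf_counter()
        conn.request("GET", path)
        response = conn.getresponse()  # returns after status line and headers arrive
        first_byte = time.perf_counter()
        response.read()                # drain the body so the connection closes cleanly
        return {
            "status": response.status,
            "connect_ms": (connected - start) * 1000,
            "wait_ms": (first_byte - connected) * 1000,
            "ttfb_ms": (first_byte - start) * 1000,
        }
    finally:
        conn.close()
```

Calling `measure_ttfb("example.com", 443, "/", use_tls=True)` several times and comparing `connect_ms` with `wait_ms` gives a first indication of whether the network or the server dominates.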

Server Software Selection

The web server software impacts baseline TTFB through different processing architectures. Nginx provides event-driven, non-blocking architecture with excellent performance for static content and reverse proxy scenarios. LiteSpeed provides built-in caching (LSCache) with exceptional PHP performance. Apache with mod_php provides traditional processing but higher overhead per request compared to Nginx or LiteSpeed. Caddy provides modern architecture with automatic HTTPS. For PHP-based websites, LiteSpeed and Nginx with PHP-FPM typically provide the lowest TTFB among commonly available server options.

Connection Keep-Alive

HTTP Keep-Alive maintains persistent connections between clients and servers, eliminating TCP and TLS handshake overhead for subsequent requests on the same connection. Keep-Alive is enabled by default in HTTP/1.1 and inherent in HTTP/2 and HTTP/3 multiplexing. Proper Keep-Alive configuration ensures that connections are maintained long enough for page resource loading while not consuming server resources indefinitely. The performance benefit of Keep-Alive is most significant for pages with many resources loaded from the same domain.

Early Hints (103)

HTTP 103 Early Hints enables the server to send preliminary response headers while the final response is still being generated. The browser uses these hints to begin loading critical resources (CSS, fonts, JavaScript) before the full HTML response is ready. Early Hints reduce effective TTFB for dependent resources by parallelizing resource discovery with server processing. CDN providers like Cloudflare provide automatic Early Hints support, analyzing previous responses to determine which resources to hint for future requests.

Compression

Server-side compression (Gzip, Brotli) reduces response size. It does not shorten the wait for the first byte, and compressing on the fly adds a small amount of CPU time before the response starts, but it reduces the time needed to transmit the rest of the response and therefore the overall load time. Brotli provides approximately 15-25% better compression than Gzip for text-based content (HTML, CSS, JavaScript), though with higher CPU usage at high compression levels. Pre-compressing static assets eliminates compression CPU overhead for frequently served files. Server configuration should enable compression for text-based content types while skipping already-compressed formats (JPEG, PNG, ZIP), where compression adds overhead without meaningful size reduction.
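The size trade-off is easy to observe with the standard library. The sketch below uses gzip only, since Brotli is not part of the Python standard library; the sample HTML is made up, and random bytes stand in for an already-compressed file such as a JPEG.

```python
import gzip
import random


def compressed_sizes(body, levels=(1, 6, 9)):
    """Return the gzip output size in bytes for each compression level."""
    return {level: len(gzip.compress(body, compresslevel=level)) for level in levels}


# Repetitive markup, typical of HTML, compresses very well.
html = ('<li class="item"><a href="/post">Example post title</a></li>\n' * 500).encode()
html_sizes = compressed_sizes(html)

# High-entropy data (a stand-in for JPEG, PNG, or ZIP content) does not shrink.
rng = random.Random(0)
opaque = bytes(rng.getrandbits(8) for _ in range(4096))
opaque_sizes = compressed_sizes(opaque)

print(len(html), html_sizes)
print(len(opaque), opaque_sizes)
```

The second result shows why servers are normally configured to skip compression for image and archive content types: the output is no smaller than the input, so the CPU time is wasted.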

HTTP/2 and HTTP/3 Impact on TTFB

HTTP/2 multiplexing reduces the effective wait for page resources by loading multiple assets concurrently over a single connection, avoiding the extra parallel connections (each with its own TCP and TLS handshake) that browsers open under HTTP/1.1. HTTP/3 with QUIC further reduces TTFB through faster connection establishment (1 round trip vs 2-3 for HTTP/2 over TCP+TLS) and 0-RTT reconnection for returning visitors. Enabling HTTP/2 and HTTP/3 at the server or CDN level provides TTFB benefits without application code changes.

Geographic Server Placement

The physical distance between the server and visitors directly impacts the network component of TTFB. Light in optical fiber covers roughly 200 km per millisecond, so each additional thousand miles (about 1,600 km) of network path adds at least 16ms of round-trip latency, and usually more because real routes are indirect. Strategies for minimizing geographic TTFB include: selecting a hosting data center closest to the majority of visitors; implementing CDN edge caching to serve content from the nearest edge location; using multiple origin servers in different regions with geographic DNS routing; and implementing edge computing to handle request processing closer to visitors.
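Choosing the data center closest to the majority of visitors can be framed as minimizing traffic-weighted round-trip time. The sketch below assumes latency has already been measured from each candidate location to each visitor region; all location names, latency figures, and traffic shares are hypothetical.

```python
def weighted_rtt_ms(rtt_by_region, traffic_share):
    """Average round-trip time weighted by each region's share of visits."""
    return sum(rtt_by_region[region] * share for region, share in traffic_share.items())


def best_origin(rtt_matrix, traffic_share):
    """Pick the candidate origin with the lowest traffic-weighted RTT."""
    return min(
        rtt_matrix,
        key=lambda origin: weighted_rtt_ms(rtt_matrix[origin], traffic_share),
    )


# Hypothetical measured RTTs (ms) from each candidate data center to each region.
rtt_matrix = {
    "frankfurt": {"eu": 20, "us": 100, "asia": 180},
    "virginia": {"eu": 90, "us": 30, "asia": 200},
    "singapore": {"eu": 170, "us": 210, "asia": 40},
}
# Hypothetical share of visits per region (sums to 1.0).
traffic_share = {"eu": 0.6, "us": 0.3, "asia": 0.1}

choice = best_origin(rtt_matrix, traffic_share)
print(choice, weighted_rtt_ms(rtt_matrix[choice], traffic_share))
```

The same calculation shows when a single origin is no longer enough: if the best weighted figure is still high, CDN edge caching or multiple regional origins become the more effective option.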

Autoscaling and Load Management

TTFB increases when server resources are exhausted under high traffic load. Autoscaling infrastructure automatically provisions additional server resources when traffic exceeds capacity thresholds, maintaining consistent TTFB during traffic spikes. Cloud hosting platforms (AWS, Google Cloud, Azure) provide autoscaling capabilities that add compute instances when CPU, memory, or request queue metrics exceed defined thresholds. For predictable traffic patterns (marketing campaigns, seasonal peaks), pre-scaling infrastructure before anticipated traffic increases prevents TTFB degradation during high-load periods.

Content Delivery Architecture

The overall content delivery architecture significantly impacts TTFB. A well-designed architecture separates static asset delivery (handled by CDN) from dynamic content generation (handled by application servers), enables page caching at multiple levels (server, application, CDN), and implements object caching for frequently accessed data. This layered architecture ensures that the majority of requests are served from cache at the lowest possible latency, with origin server processing limited to truly dynamic content that cannot be cached.

Real User Monitoring for TTFB

Real User Monitoring (RUM) provides TTFB data from actual visitor experiences, capturing the full diversity of network conditions, geographic locations, and device types that synthetic testing cannot replicate. The Navigation Timing API provides programmatic access to TTFB: in current browsers, performance.getEntriesByType("navigation")[0].responseStart gives the time from navigation start to the first response byte (the older performance.timing.responseStart - performance.timing.navigationStart form is deprecated). RUM data segmented by geography, device type, and network connection identifies specific user populations experiencing elevated TTFB, guiding targeted optimization for the most impacted visitor segments.
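On the collection side, segmentation usually means grouping the reported samples and comparing a percentile per group. The sketch below assumes samples arrive as dictionaries with a `ttfb_ms` field and uses the 75th percentile, the percentile Google applies to field data; the field names and sample values are hypothetical.

```python
import math
from collections import defaultdict


def percentile(values, pct):
    """Nearest-rank percentile of a non-empty list of numbers."""
    ordered = sorted(values)
    rank = math.ceil(pct / 100 * len(ordered))
    return ordered[max(rank, 1) - 1]


def p75_by_segment(samples, key):
    """Group RUM samples by one field and return the p75 TTFB per group."""
    groups = defaultdict(list)
    for sample in samples:
        groups[sample[key]].append(sample["ttfb_ms"])
    return {segment: percentile(values, 75) for segment, values in groups.items()}


# Hypothetical RUM samples as they might be collected from visitors.
samples = [
    {"country": "DE", "ttfb_ms": 100},
    {"country": "DE", "ttfb_ms": 120},
    {"country": "DE", "ttfb_ms": 140},
    {"country": "DE", "ttfb_ms": 900},
    {"country": "BR", "ttfb_ms": 400},
    {"country": "BR", "ttfb_ms": 800},
    {"country": "BR", "ttfb_ms": 850},
    {"country": "BR", "ttfb_ms": 1200},
]

print(p75_by_segment(samples, "country"))
```

In this made-up data, one slow outlier does not move the German p75, while Brazilian visitors are consistently slow, which points to a geographic rather than a server-side cause.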

TTFB Budgets and Targets

Establishing TTFB performance budgets provides measurable targets for optimization efforts. Google recommends TTFB under 800ms as the threshold for a good experience, but competitive websites should target much lower values. Recommended TTFB targets include: under 200ms for cached pages (achievable with page caching and CDN); under 600ms for dynamic pages (achievable with optimized application code and object caching); and under 100ms for static assets served from CDN edge caches. Performance budgets should be enforced through automated monitoring and alerting.
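A budget like this can be encoded directly so that a monitoring job or CI step can flag regressions. The following is a minimal sketch using the targets listed above; the category keys, function name, and sample measurements are hypothetical.

```python
# Budgets in milliseconds, taken from the targets listed above.
BUDGETS_MS = {
    "cached_page": 200,
    "dynamic_page": 600,
    "static_asset": 100,
}


def budget_violations(measurements):
    """Return the measurements that are not under their category's budget.

    `measurements` is a list of (url, category, ttfb_ms) tuples.
    """
    return [
        (url, category, ttfb_ms)
        for url, category, ttfb_ms in measurements
        if ttfb_ms >= BUDGETS_MS[category]
    ]


# Hypothetical measurements from a synthetic test run.
measured = [
    ("/", "cached_page", 85),
    ("/account", "dynamic_page", 720),
    ("/static/site.css", "static_asset", 40),
    ("/blog", "cached_page", 200),
]

for url, category, ttfb_ms in budget_violations(measured):
    print(f"{url}: {ttfb_ms}ms is not under the {BUDGETS_MS[category]}ms {category} budget")
```

A non-empty result can fail a deployment pipeline or trigger an alert, which turns the budget from a guideline into an enforced limit.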

Monitoring and Alerting

Continuous TTFB monitoring enables detecting performance degradation before it impacts user experience and search rankings. Monitoring approaches include: synthetic monitoring (scheduled tests from multiple locations) for consistent baseline measurement; real user monitoring (RUM) for actual visitor TTFB data; server-side monitoring (response time logging) for internal performance tracking; and alerting thresholds that notify administrators when TTFB exceeds acceptable levels. Correlating TTFB changes with server events (deployments, traffic spikes, database growth) identifies the root causes of performance changes.

Database Connection Pooling

Database connection establishment adds latency to each request that requires database access. Connection pooling maintains a pool of pre-established database connections that are reused across requests, eliminating connection establishment overhead. PHP-FPM with persistent connections provides basic connection reuse. External connection pooling solutions (ProxySQL for MySQL, PgBouncer for PostgreSQL) provide more sophisticated connection management. For WordPress sites with high traffic, connection pooling can reduce TTFB by 10-50ms per request by eliminating database connection establishment time.
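The reuse pattern behind connection pooling can be sketched as follows. This is an illustration only: SQLite stands in for a networked database, the class name is hypothetical, and production pools such as ProxySQL or PgBouncer also handle health checks, timeouts, and reconnection.

```python
import queue
import sqlite3
from contextlib import contextmanager


class ConnectionPool:
    """Opens a fixed number of connections up front and lends them out."""

    def __init__(self, size, connect):
        self._idle = queue.Queue(maxsize=size)
        for _ in range(size):
            self._idle.put(connect())  # pay the connection cost once, at startup

    @contextmanager
    def connection(self, timeout=5.0):
        conn = self._idle.get(timeout=timeout)  # wait if all connections are busy
        try:
            yield conn
        finally:
            self._idle.put(conn)  # return it for the next request


def open_connection():
    # check_same_thread=False lets the pooled connection be used by worker threads.
    return sqlite3.connect(":memory:", check_same_thread=False)


pool = ConnectionPool(size=2, connect=open_connection)

with pool.connection() as conn:
    value = conn.execute("SELECT 1 + 1").fetchone()[0]
```

Each request borrows an existing connection instead of opening a new one, so the handshake with the database server no longer sits on the request path.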

Server-Side Rendering Optimization

For applications using server-side rendering (SSR), rendering time directly contributes to TTFB. SSR optimization approaches include: implementing component-level caching to avoid re-rendering unchanged components; using streaming SSR to send partial HTML responses before rendering completes; caching rendered pages at the application level (page caching); and optimizing rendering code to minimize CPU-intensive operations during page generation. For WordPress, server-side rendering optimization translates to theme optimization, reducing template complexity, and minimizing hook and filter execution during page rendering.
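Streaming SSR can be illustrated with a generator that emits the document head before any slow data is fetched. The sketch below is framework-agnostic and the function names are hypothetical; in practice the generator would be returned as the body of a WSGI or ASGI response that supports chunked output.

```python
import html


def render_streaming(load_items):
    """Yield HTML in chunks so the first bytes leave before slow work starts."""
    # The head is static, so it can be sent immediately. The browser can begin
    # fetching the stylesheet while the server is still loading data.
    yield (
        "<!doctype html><html><head><title>Products</title>"
        '<link rel="stylesheet" href="/site.css"></head><body>'
    )
    yield "<ul>"
    # Only now is the slow data source touched (e.g. a database query).
    for item in load_items():
        yield f"<li>{html.escape(item)}</li>"
    yield "</ul></body></html>"
```

Because the first chunk does not depend on `load_items`, the time to first byte is decoupled from the slowest query on the page, although the total render time stays the same.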

Reverse Proxy Caching

Reverse proxy servers (Varnish, Nginx as reverse proxy) cache responses in front of the application server, serving cached content at web server speed without invoking the application for cached requests. Varnish in particular is designed specifically for HTTP caching and provides extremely fast cached response delivery with sophisticated cache invalidation capabilities. Many managed hosting providers implement Varnish or Nginx reverse proxy caching as part of their infrastructure, providing server-level TTFB optimization that works alongside WordPress caching plugins.

TTFB Optimization Priority Order

When optimizing TTFB, prioritize actions by potential impact: first implement page caching (greatest single improvement for most sites); then optimize hosting infrastructure (appropriate server resources and geographic location); then implement CDN delivery for static assets and potentially cached HTML; then optimize database performance (indexes, query optimization, object caching); then fine-tune server configuration (PHP version, OPcache, web server software); and finally optimize network delivery (DNS provider, TLS configuration, HTTP/2/3). This priority order ensures maximum TTFB improvement from the earliest optimization efforts.

Summary

Server response time (TTFB) is a foundational performance metric that influences all subsequent page loading metrics and user experience. Effective TTFB optimization addresses multiple layers: server processing efficiency through code optimization, database performance, and caching; network delivery through CDN implementation and DNS optimization; protocol efficiency through TLS 1.3, HTTP/2/3, and connection management; and infrastructure selection through appropriate hosting and server software. WordPress sites benefit from specialized optimization through caching plugins, PHP updates, and managed hosting. Continuous and proactive TTFB monitoring ensures sustained performance as websites evolve and traffic patterns change.

Techniques and tools discussed in this guide reflect current web performance standards. Specific configurations vary by server software and hosting environment. Okut Hosting is an independent review platform with no affiliate relationships with any company mentioned in this article.

For related guides, see our Core Web Vitals guide, our browser caching guide, and our WordPress caching plugins guide.